Navigating the AI Risk Landscape: A Deep Dive into AAISM Domain 2

Managing the dynamic AI risk landscape: Continuous assessment, specialized technical threat mitigation, and rigorous supply chain oversight to ensure secure and trustworthy systems.

Harshaun Singh

2/24/2026 · 3 min read

While Domain 1 focused on high-level governance and strategy, Domain 2: AI Risk Management moves into the operational heart of the AAISM framework. AI systems are unique because they are designed to learn and change over time, so risk is an inherent, dynamic characteristic rather than a static technical defect that can be patched and forgotten.

To master this domain, we must explore how organizations identify, assess, and mitigate the specific threats introduced by machine learning.

--------------------------------------------------------------------------------

1. Risk Assessment and Treatment: Frameworks for Trust

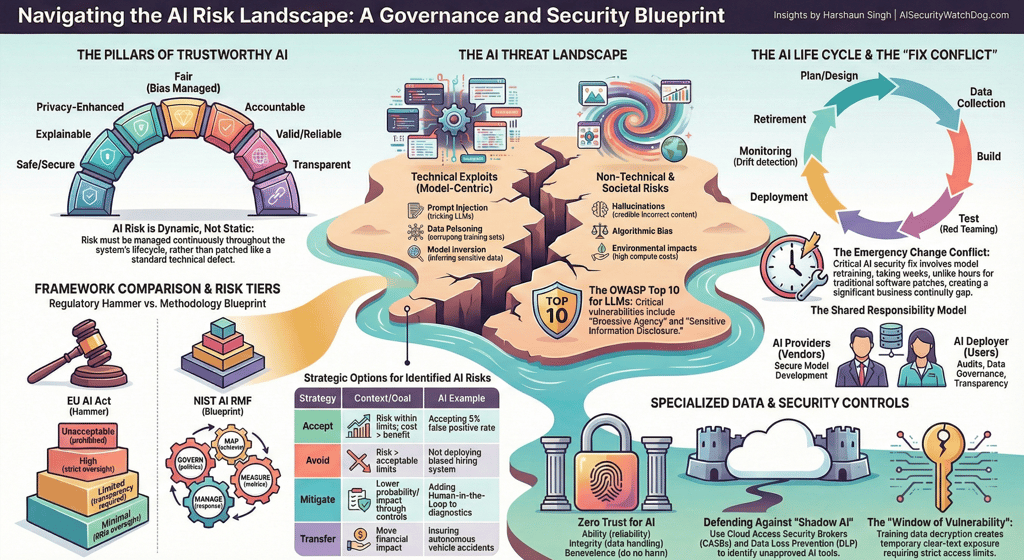

The core goal of an AI risk program is to ensure "Trustworthy AI": systems that are safe, explainable, privacy-enhanced, and fair. Two major approaches have emerged to achieve this:

The EU AI Act ("The Regulatory Hammer"): This is a mandatory, legally binding checklist that classifies AI into four risk tiers: Prohibited (e.g., social scoring), High Risk (e.g., critical infrastructure), Limited Risk (e.g., chatbots), and Minimal Risk.

The NIST AI RMF ("The Methodology Blueprint"): This is a voluntary, flexible roadmap centered on four functions: Govern, Map, Measure, and Manage.
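To make the four EU AI Act tiers concrete, here is a minimal triage sketch. The lookup table, the `triage` function, and the use-case strings are hypothetical names chosen for illustration; the three mapped examples come from the tier descriptions above. It is not a legal classification tool, and anything it does not recognize is deliberately left unclassified so that a human reviews it.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    """The four tiers named above, from most to least restricted."""
    PROHIBITED = 4
    HIGH = 3
    LIMITED = 2
    MINIMAL = 1


# Hypothetical lookup table for illustration only. A real classification
# depends on the system's purpose and context and needs legal review.
EXAMPLE_USE_CASES = {
    "social scoring": RiskTier.PROHIBITED,
    "critical infrastructure": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
}


def triage(use_case: str) -> Optional[RiskTier]:
    """Return the tier for a known use case, or None if it needs manual review."""
    return EXAMPLE_USE_CASES.get(use_case.strip().lower())


if __name__ == "__main__":
    for case in ("Social Scoring", "Chatbot", "spam filter"):
        tier = triage(case)
        label = tier.name if tier else "UNCLASSIFIED (send to review)"
        print(f"{case}: {label}")
```

Returning `None` for unknown use cases, rather than defaulting to Minimal Risk, is a design choice in this sketch: it keeps an unreviewed system from being silently treated as low risk.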

Essential Assessments: Organizations must conduct specialized reviews, such as the Fundamental Rights Impact Assessment (FRIA) for high-risk systems and the Privacy Impact Assessment (PIA). A critical new concern is "inference-based privacy risk," where an AI deduces sensitive information about an individual that they never actually provided.

Response Strategies: Once risks are identified, leaders must choose to Accept, Avoid, Mitigate (often via Human-in-the-Loop oversight), or Transfer the risk through mechanisms like insurance.
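The four response options can be sketched as a small risk-register helper. Everything numeric here is an assumption made for illustration: the 1 to 5 likelihood and impact scales, the likelihood × impact score, and the thresholds (an appetite of 6 and an avoid threshold of 20). A real program would set these according to its own risk appetite.

```python
from dataclasses import dataclass
from enum import Enum


class Treatment(Enum):
    ACCEPT = "accept"
    AVOID = "avoid"
    MITIGATE = "mitigate"
    TRANSFER = "transfer"


@dataclass
class Risk:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain); assumed scale
    impact: int      # 1 (negligible) to 5 (severe); assumed scale

    @property
    def score(self) -> int:
        """Simple likelihood x impact score (range 1 to 25)."""
        return self.likelihood * self.impact


def suggest_treatment(risk: Risk, appetite: int = 6,
                      avoid_at: int = 20,
                      transferable: bool = False) -> Treatment:
    """Suggest a response using illustrative thresholds, not a standard."""
    if risk.score <= appetite:
        return Treatment.ACCEPT      # within appetite: accept and monitor
    if risk.score >= avoid_at:
        return Treatment.AVOID       # too severe: do not deploy this use case
    if transferable:
        return Treatment.TRANSFER    # e.g. insurance or contractual terms
    return Treatment.MITIGATE        # e.g. add Human-in-the-Loop oversight


if __name__ == "__main__":
    hallucination = Risk("chatbot gives false policy information", 4, 3)
    print(hallucination.score, suggest_treatment(hallucination).value)
```

The output of such a helper is only a starting point for discussion; the decision itself stays with the accountable risk owner.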

--------------------------------------------------------------------------------

2. Threat and Vulnerability Management: Technical & Non-Technical Risks

Securing AI requires safeguarding the logic, data, and integrity of the models themselves, moving beyond traditional network security.

Technical Exploits: The OWASP Top 10 for LLM Applications identifies critical vulnerabilities like Prompt Injection (social engineering the AI using language), Sensitive Information Disclosure, and Excessive Agency. Other major threats include Data Poisoning (corrupting training data) and Model Evasion (modifying inputs to cause misclassification).

Non-Technical Threats: These are often the most damaging because they cannot be fixed with a simple patch. They include Hallucinations (credible-sounding but false information), Bias, and Societal Manipulation through Deepfakes.

AI-Enabled Threats: Adversaries are now using AI to "turbocharge" the cyber kill chain, creating polymorphic malware and highly convincing phishing campaigns.
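Model Evasion, mentioned under Technical Exploits above, is easier to grasp with a toy example. The sketch below assumes a simple linear classifier whose weights the attacker already knows (a white-box assumption); the weights, bias, input, and epsilon are all made-up values. It nudges each feature slightly in the direction that lowers the score until the prediction flips.

```python
def predict(weights, bias, x):
    """Linear classifier: 1 (e.g. 'malicious') if the score is positive, else 0."""
    score = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if score > 0 else 0


def _sign(value):
    """Return -1, 0, or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)


def evade(weights, x, epsilon):
    """Shift every feature by epsilon in the direction that lowers the score."""
    return [xi - epsilon * _sign(w) for w, xi in zip(weights, x)]


# Hypothetical model parameters and input, chosen only for illustration.
weights = [0.8, -0.5, 0.3]
bias = -0.2
x = [0.6, 0.2, 0.4]

original = predict(weights, bias, x)
adversarial_input = evade(weights, x, epsilon=0.25)
adversarial = predict(weights, bias, adversarial_input)

if __name__ == "__main__":
    print("original prediction:   ", original)
    print("adversarial prediction:", adversarial)
```

No feature changes by more than 0.25, yet the classification flips from 1 to 0. Attacks on real models follow the same idea with far more features and often without direct access to the weights.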

--------------------------------------------------------------------------------

3. Vendor and Supply Chain Management: The Shared Responsibility Model

AI adoption dramatically expands supply chain risk through a web of data providers, model developers, and cloud hosts.

The Shared Responsibility Model: Even when using a third-party vendor, the AI Deployer (the organization using the system) remains accountable for the system's impact. For example, if an airline's vendor-supplied chatbot incorrectly grants a refund, the airline can be held to that decision because the chatbot acts on the airline's behalf.

Due Diligence: Vetting must go beyond standard reviews to include data provenance (where the data came from) and the vendor's ability to handle regulatory shifts.

Integration Risks: Organizations must be wary of "Nth-party" risks (the vendor's vendors) and the potential for Intellectual Property (IP) litigation if a model was trained on copyrighted material without a license.
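One small, automatable piece of the data provenance check described under Due Diligence is confirming that the dataset you received matches what the vendor documented. The sketch below assumes the vendor supplies a manifest mapping file names to SHA-256 hashes; that manifest format and the function names are hypothetical. A matching hash shows the data was not altered after the manifest was written. It does not prove where the data originally came from or that it was properly licensed.

```python
import hashlib


def sha256_of(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_provenance(manifest: dict, artifacts: dict) -> list:
    """Return names of artifacts that are missing or do not match the manifest.

    manifest:  file name -> expected SHA-256 hex digest (assumed vendor format)
    artifacts: file name -> raw bytes actually received
    """
    failures = []
    for name, expected in manifest.items():
        data = artifacts.get(name)
        if data is None or sha256_of(data) != expected:
            failures.append(name)
    return failures


if __name__ == "__main__":
    received = b"id,label\n1,approved\n2,denied\n"
    manifest = {"train.csv": sha256_of(received)}

    print(verify_provenance(manifest, {"train.csv": received}))      # []
    print(verify_provenance(manifest, {"train.csv": b"tampered"}))   # ['train.csv']
```

An empty list means every documented file arrived intact; any name in the list is a reason to go back to the vendor before training or deployment.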

--------------------------------------------------------------------------------

💡 Knowledge Check

1. Which framework is referred to as the "Methodology Blueprint" for AI risk?

Answer: The NIST AI RMF, because it provides a flexible roadmap (Govern, Map, Measure, Manage) rather than a rigid legal checklist.

2. What is "Inference-based Privacy Risk"?

Answer: A risk where an AI system deduces sensitive personal information about an individual that was never explicitly shared in the original data.

3. If a company uses a third-party AI chatbot and it makes a biased or legally binding error, who is ultimately accountable?

Answer: The AI Deployer (the company using the tool). Under the Shared Responsibility Model, the deployer is responsible for the system's output and its impact on end-users.

4. What is the difference between Prompt Injection and Data Poisoning?

Answer: Prompt Injection is a social engineering attack using language to trick a deployed model into ignoring safety rules. Data Poisoning is an attack on the source, inserting malicious data into the training set to corrupt the model's logic before it is even deployed.

5. Name one "Prohibited" use of AI under the EU AI Act.

Answer: Examples include social scoring and the untargeted scraping of facial images from the internet to build facial recognition databases.
