Mastering AI Governance: A Deep Dive into AAISM Domain 1

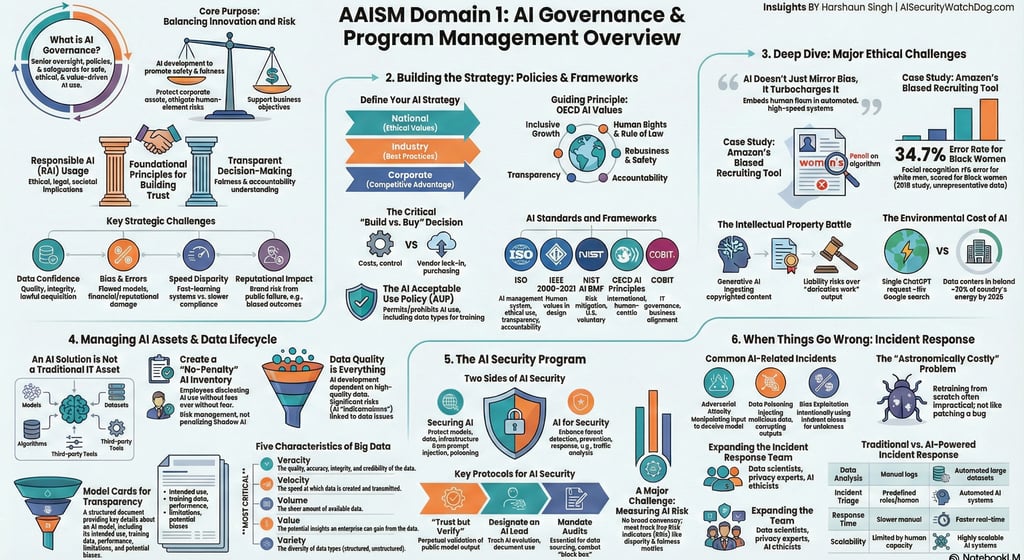

Domain 1 establishes the strategic framework for AI Governance and Program Management, utilizing senior oversight and ethical safeguards to balance innovation with comprehensive risk management.

AAISM DOMAIN 1 | TRAINING | ISACA - AAISM

Harshaun Singh

2/24/2026 | 4 min read

In an era where AI promises unprecedented efficiency and competitive advantage, robust governance is not merely a compliance checkbox; it is the core strategy that ensures the promised value is actually realized. AI Governance is the strategic framework that enables an organization to balance innovation with comprehensive risk management, protecting data, reputation, and ethical standards.

Below is a detailed exploration of the five core topics within Domain 1 of the AAISM framework.

--------------------------------------------------------------------------------

1. Stakeholder and Regulatory Considerations

AI Governance extends beyond traditional IT security to address the unique challenges of AI, such as promoting fairness and human rights while mitigating the "human element" of bias.

Organizational Readiness: Before adoption, an organization must assess its AI Readiness, ensuring it has mature governance processes, dedicated committees (like an AI Strategy Committee), and the right talent to make informed decisions.

Roles and Responsibilities: The Enterprise’s Governing Body (Board of Directors) holds ultimate, non-delegable responsibility for AI outcomes. Strategic oversight is often managed by a cross-functional AI Steering Committee composed of senior leaders and domain experts.

The Regulatory Landscape: Organizations must navigate various frameworks. The EU AI Act serves as a "regulatory hammer," a legally binding compliance checklist with high penalties for non-compliance. In contrast, the NIST AI RMF acts as a "methodology blueprint," providing a flexible, voluntary structure for managing risk. Other key standards include ISO/IEC 42001 for AI management systems and the OECD AI Principles.

2. AI Risk Management (Strategy and Policy)

A formal AI strategy and associated policies are the mechanisms that translate high-level governance into day-to-day guidance.

Build vs. Buy: A core strategic decision is whether to develop AI in-house or purchase it from a vendor. Closely related is where the system runs: On-Premises hosting offers more control but requires significant resources, while Cloud options offer scalability but introduce concerns regarding data sovereignty and vendor lock-in.

The Shared Responsibility Model: Even when using third-party software, the AI Deployer remains responsible for how the system is used and the data it processes.

Ethical Challenges: AI doesn’t just mirror bias; it turbocharges it, making human flaws harder to root out once embedded in automated systems. Beyond bias, organizations must manage Intellectual Property risks regarding training data and the significant Environmental Impact of AI workloads, which consume massive amounts of electricity.

3. AI Technologies and Controls

Effective security begins with a comprehensive inventory and a robust lifecycle management plan for both models and data.

AI Asset Identification: Unlike traditional IT assets, an AI Solution (AIS) is a complex system of interconnected models, datasets, and algorithms. Inventory methods include discovery tools, surveys, and interviews.
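Because an AIS is a web of interconnected assets, an inventory is most useful when it records the links between them. The sketch below is one possible shape for such a record, not a prescribed AAISM format; the `AIAsset` fields, the asset names, and the `dependencies` helper are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AIAsset:
    """One inventory entry; every field name here is illustrative."""
    asset_id: str
    kind: str    # "model", "dataset", or "algorithm"
    owner: str
    source: str  # how it was found: "discovery_tool", "survey", "interview"
    depends_on: list[str] = field(default_factory=list)


def dependencies(inventory: dict[str, AIAsset], asset_id: str) -> set[str]:
    """Return every asset reachable from asset_id via depends_on links."""
    seen: set[str] = set()
    stack = list(inventory[asset_id].depends_on)
    while stack:
        current = stack.pop()
        if current in seen:
            continue
        seen.add(current)
        if current in inventory:
            stack.extend(inventory[current].depends_on)
    return seen


# Hypothetical inventory: a chatbot that relies on a ranker and two datasets.
inventory = {
    asset.asset_id: asset
    for asset in [
        AIAsset("chatbot-v2", "model", "support-team", "discovery_tool",
                depends_on=["tickets-2024", "ranker-v1"]),
        AIAsset("ranker-v1", "model", "search-team", "survey",
                depends_on=["clicks-2023"]),
        AIAsset("tickets-2024", "dataset", "support-team", "interview"),
        AIAsset("clicks-2023", "dataset", "search-team", "survey"),
    ]
}

print(sorted(dependencies(inventory, "chatbot-v2")))
# ['clicks-2023', 'ranker-v1', 'tickets-2024']
```

Walking the dependency links shows which datasets and upstream models are in scope when a single AI solution is reviewed or involved in an incident.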

Data Quality (The Five Vs): While Velocity, Volume, Value, and Variety are important, Veracity (quality and integrity) is the most critical. If the data is inaccurate, the model learns from flawed information, which increases the risk of unreliable outputs such as "hallucinations."

Transparency Tools: Model Cards and Data Sheets have emerged as essential for governance, providing structured overviews of a model’s objectives, training data, performance metrics, and known limitations.
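As a rough illustration, a Model Card can be kept as structured data so that missing sections are easy to flag during review. This is a minimal sketch, assuming the four sections named above; the `ModelCard` class, its field names, and the example values are hypothetical and do not follow any official template.

```python
from dataclasses import dataclass, field, asdict
import json


@dataclass
class ModelCard:
    """Structured overview of a model; the section names are illustrative."""
    name: str
    objectives: str
    training_data: str
    performance_metrics: dict[str, float] = field(default_factory=dict)
    known_limitations: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """List the sections that are still empty, for a governance review."""
        return [key for key, value in asdict(self).items() if not value]


# Hypothetical card with the limitations section left blank.
card = ModelCard(
    name="loan-approval-v3",
    objectives="Rank consumer loan applications for manual review.",
    training_data="Internal applications, 2019-2023 (hypothetical).",
    performance_metrics={"accuracy": 0.91},
)

print(json.dumps(asdict(card), indent=2))
print(card.missing_sections())  # ['known_limitations']
```

A review gate could refuse to approve a model while `missing_sections()` is non-empty, which keeps "known limitations" from being silently skipped.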

4. AI Security Program Management

An AI security program must be a proactive extension of existing frameworks, acknowledging that AI is probabilistic and constantly evolving.

Securing AI vs. AI for Security: Securing AI focuses on protecting the models and training data from attacks like prompt injection. AI for Security leverages AI technologies to enhance traditional processes, such as using AI in SIEM systems or for Behavioral AI (BAI) to detect insider threats.

Governance Integration: Frameworks like COBIT can be tailored to align AI initiatives with business goals and ethical standards.

Metrics for Success: Because there is no broad consensus on how to measure AI risk, organizations should track both KPIs (e.g., accuracy, ROI) to measure performance and KRIs (e.g., disparity and fairness metrics) to surface potential bias.
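To make the idea of a disparity KRI concrete, the sketch below compares how often each group receives a positive prediction. The function names and sample data are hypothetical, and the 0.8 cut-off is only the commonly cited "four-fifths" rule of thumb, used here as an assumption rather than a threshold taken from the AAISM material.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Share of positive (1) predictions per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def disparity_kri(groups, predictions):
    """Two simple disparity indicators across all groups."""
    rates = selection_rates(groups, predictions)
    lowest, highest = min(rates.values()), max(rates.values())
    return {
        "parity_difference": highest - lowest,
        "impact_ratio": lowest / highest if highest > 0 else 1.0,
    }


# Hypothetical sample: group "a" is approved 3 of 4 times, group "b" 1 of 4.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
predictions = [1, 1, 1, 0, 1, 0, 0, 0]

kri = disparity_kri(groups, predictions)
ALERT_RATIO = 0.8  # assumed threshold, not a mandated value
print(kri)
print("review needed:", kri["impact_ratio"] < ALERT_RATIO)
```

In this sample the selection rates are 0.75 and 0.25, so the parity difference is 0.5 and the impact ratio is about 0.33, well below the assumed threshold.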

5. Business Continuity and Incident Response

AI introduces unique challenges to incident response (IR), as the probabilistic nature of models makes detecting a "failure" difficult.

AI-Specific Incidents: IR plans must account for Adversarial Attacks, Data Poisoning, and Model Drift (the gradual degradation of performance as real-world data diverges from the data the model was trained on).
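Drift in particular can be monitored with simple statistics long before it becomes an incident. The sketch below uses the Population Stability Index (PSI), one common choice among several, to compare live model scores against a baseline. The bin edges, the sample values, and the 0.2 alert level are assumptions chosen for illustration.

```python
import math


def psi(expected, actual, edges):
    """Population Stability Index between two samples over shared bin edges."""
    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            idx = sum(v >= e for e in edges)  # index of the bin v falls into
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical model scores at training time and in production.
baseline = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
live = [0.6, 0.7, 0.8, 0.9, 0.8, 0.9, 0.7, 0.95]
edges = [0.25, 0.5, 0.75]

DRIFT_ALERT = 0.2  # assumed rule-of-thumb level, tune per model
score = psi(baseline, live, edges)
print("drift suspected:", score > DRIFT_ALERT)  # drift suspected: True
```

A PSI of zero means the two distributions fall into the bins identically; larger values indicate a bigger shift and can be wired into the IR plan as a trigger for investigation.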

The Retraining Conflict: Emergency change management assumes a fix can be deployed quickly, and AI breaks that assumption. While a traditional software patch can often be deployed within hours, a corrupted AI model may require retraining, which can take weeks.

Resilience Strategies: Organizations must develop alternative strategies, such as rolling back to an older model version or implementing output sanitization as a temporary "shield" while a long-term fix is developed.
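Both strategies can be sketched in a few lines. The example below is a toy, in-memory illustration rather than a production design: the `ModelRegistry` class, the version labels, and the redaction pattern are all hypothetical, and a real registry would persist model artifacts and audit every activation.

```python
import re


class ModelRegistry:
    """Toy in-memory registry; a real one would persist versions."""

    def __init__(self):
        self._versions = {}  # version label -> callable model
        self._history = []   # activation order, newest last

    def register(self, version, model):
        self._versions[version] = model

    def activate(self, version):
        if version not in self._versions:
            raise KeyError(f"unknown model version: {version}")
        self._history.append(version)

    @property
    def active(self):
        return self._history[-1]

    def rollback(self):
        """Re-activate the previously active version."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self.active

    def predict(self, prompt):
        return self._versions[self.active](prompt)


# Assumed pattern: redact strings shaped like a US Social Security number.
SENSITIVE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def sanitize(output):
    """Temporary output "shield" applied while a long-term fix is prepared."""
    return SENSITIVE.sub("[REDACTED]", output)


registry = ModelRegistry()
registry.register("v1", lambda prompt: "Your request was received.")
registry.register("v2", lambda prompt: "Customer ID is 123-45-6789.")
registry.activate("v1")
registry.activate("v2")

print(sanitize(registry.predict("status?")))  # Customer ID is [REDACTED].
registry.rollback()
print(registry.active)  # v1
```

Sanitization limits the damage immediately, while the rollback restores a known-good model; neither replaces the retraining that the long-term fix may require.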

--------------------------------------------------------------------------------

💡 Knowledge Check

1. Who holds the ultimate, non-delegable responsibility for the consequences of an organization's AI systems?

Answer: The Enterprise’s Governing Body (e.g., the Board of Directors).

2. What is the difference between the EU AI Act and the NIST AI RMF philosophies?

Answer: The EU AI Act is a mandatory "regulatory hammer" (legally binding checklist), while the NIST AI RMF is a voluntary "methodology blueprint" (flexible roadmap).

3. Which of the "Five Vs" of Big Data is the primary defense against hallucinations?

Answer: Veracity (quality, accuracy, and integrity).

4. Why is "Emergency Change Management" different for AI than for traditional IT?

Answer: Traditional IT patches take hours, but correcting fundamental AI flaws requires retraining, which can take weeks.

5. What is the "Shared Responsibility Model" in the context of AI?

Answer: While a vendor may provide the model, the AI Deployer remains responsible for how the system is used, the data it processes, and its ultimate impact.

6. What are "Model Cards" used for?

Answer: They provide a transparent overview of a machine learning model, detailing its objectives, training data, capabilities, and limitations.
